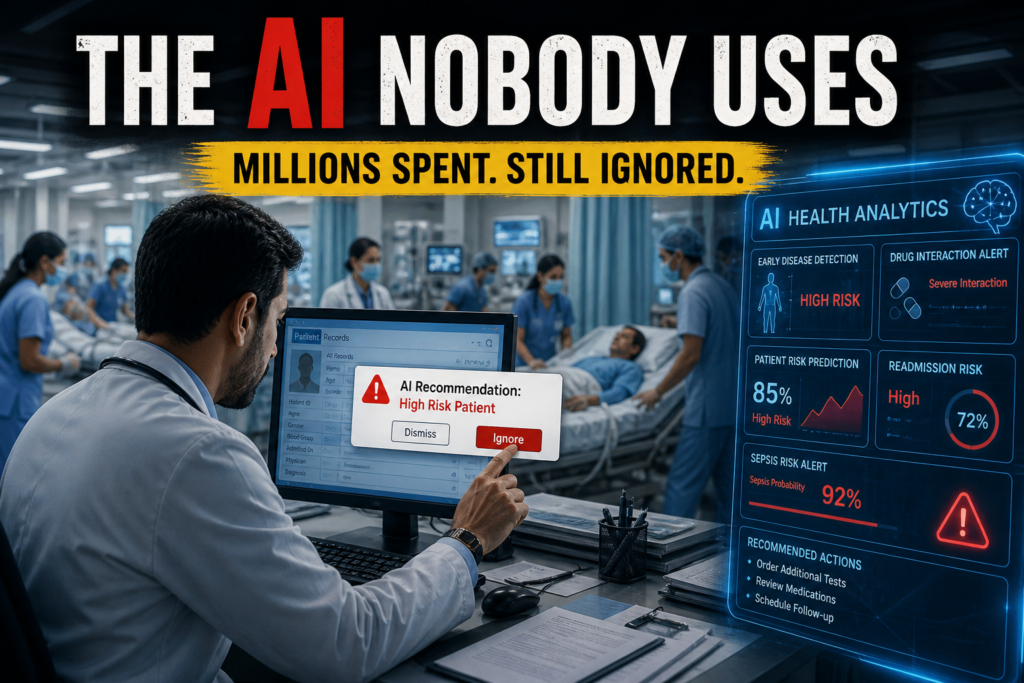

Hospitals around the world are buying some of the most advanced AI ever built for medicine. Much of it is sitting unused. This is not a technology problem. It is a human one.

Imagine a hospital that spends millions deploying an AI system capable of spotting early signs of disease, flagging dangerous drug combinations, and predicting which patients are most at risk. The system goes live. The doctors keep working the way they always have. The AI sits in a corner of the screen, largely ignored. Six months later, someone in management asks whether the investment was worth it. Nobody has a clean answer. This is not a hypothetical. It is happening in hospitals across the world right now, and understanding why requires looking not at the AI itself but at the rooms, routines, and pressures it was dropped into.

Research consistently shows that even technically accurate AI systems fail in practice when they are not built around how doctors actually work. A 2025 implementation study drawing on data from real-world healthcare deployments found that poorly designed AI tools generate alert override rates of between 90 and 96 percent, meaning doctors are ignoring nearly every recommendation the AI makes. This is not stubbornness. It is a rational response to a system that keeps interrupting without adding real value. Research from Vanderbilt University Medical Center confirms that up to 90 percent of clinical alert notifications in hospitals are already ignored because there are too many of them and too few are genuinely useful.

The deeper problem is how these tools are built in the first place. About 82 percent of clinical AI development efforts consult doctors only at later stages of the process, often after the core system has already been designed. Half of all projects gather feedback from actual users only after the product is complete, which is precisely the point at which fixing a fundamental workflow mismatch becomes extremely expensive. The AI gets built by engineers. The doctors are told to use it. And then everyone is surprised when they don’t.

“80 percent of healthcare AI projects never move beyond the pilot phase. The gap between proof-of-concept and scaled, working deployment is where most of the money disappears.”

The $4 billion cautionary tale

The most dramatic version of this story belongs to IBM Watson for Oncology. In 2012, IBM partnered with Memorial Sloan Kettering Cancer Center in New York with an ambitious goal: train an AI to deliver expert cancer treatment recommendations to doctors anywhere in the world, democratising the knowledge of the best oncologists on the planet. It was a genuinely beautiful idea. IBM invested over $4 billion in acquisitions to build Watson Health, including $1 billion for Merge Healthcare and $2.6 billion for Truven Health Analytics. MD Anderson Cancer Center in Texas spent $62 million on a separate Watson-powered project before cancelling it in 2016 without ever launching a commercial product. By 2018, more than a dozen IBM partners had stopped or scaled back their oncology projects. In 2022, IBM sold Watson Health to a private equity firm for approximately $1 billion, a loss, by most estimates, of several billion dollars.

What went wrong? The system had been trained largely on hypothetical cases curated by a handful of elite institutions. When it encountered real patients at different hospitals, with messier histories, different treatment environments, and different clinical cultures, it struggled badly. Its recommendations sometimes directly conflicted with what local oncologists knew to be right for their patients. The fundamental mismatch, as IEEE Spectrum reported, was between how machines learn and how doctors actually work. The AI had been designed in isolation from the human reality it was supposed to serve.

When the numbers look good but the patients don’t

One of the most persistent traps in healthcare AI is the gap between performance in a clean test environment and performance in an actual hospital. AI models that achieve 95 percent accuracy during testing routinely drop to around 70 percent in real clinical use, a 25-point fall that is not a technology glitch but a reflection of how different real wards are from controlled research datasets. Epic’s widely deployed sepsis prediction model is a well-documented example. External validation at Michigan Medicine found the model performing significantly below Epic’s own published figures, with a sensitivity of only 33 percent at recommended thresholds. In practical terms, doctors would need to investigate 109 flagged patients just to find one who actually needed sepsis intervention, burying them in false alarms rather than helping them catch critical cases faster. A comprehensive review published in The Lancet Digital Health in 2024, which examined nearly 2,600 records on healthcare AI, found only 18 randomised controlled trials that met criteria for measuring actual patient outcomes. Of those, only 63 percent reported any measurable benefit to patients.
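
For readers who want to see how figures like "33 percent sensitivity" and "109 flagged patients per real case" are derived, here is a minimal Python sketch. The counts are hypothetical, chosen only so the two ratios match the numbers quoted above; they are not the Michigan Medicine data, and the function names are illustrative.

```python
# Illustrative counts only: chosen to reproduce the ratios quoted in the text,
# NOT taken from the Michigan Medicine validation study.
true_positives = 33      # patients needing intervention that the model flagged
false_negatives = 67     # patients needing intervention that the model missed
false_positives = 3564   # flagged patients who did not need intervention


def sensitivity(tp: int, fn: int) -> float:
    """Share of real cases the model catches."""
    return tp / (tp + fn)


def number_needed_to_evaluate(tp: int, fp: int) -> float:
    """Flagged patients a clinician must review to find one real case."""
    return (tp + fp) / tp


print(f"sensitivity: {sensitivity(true_positives, false_negatives):.0%}")
print(f"flags per real case: {number_needed_to_evaluate(true_positives, false_positives):.0f}")
```

The point of the sketch is that a model can miss most real cases and still flood staff with alerts: both numbers come from the same small set of counts, and neither shows up in a single headline accuracy figure.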

The hidden cost nobody puts in the brochure

There is also a financial reality that hospitals tend to discover only after signing the contract. Actual AI deployment costs in healthcare run 30 to 50 percent above the initial quoted price once data migration, workflow redesign, staff training, and ongoing optimisation are included. A project quoted at $500,000 realistically costs between $650,000 and $750,000 by the time it is fully operational. Modern Healthcare’s 2024 analysis found that despite widespread expectations of cost savings, most hospitals that had adopted AI were unable to calculate any quantifiable financial return. According to Gartner research on AI model failure, 85 percent of AI models fail because of poor data quality, and 80 percent of healthcare AI projects never move beyond the pilot phase. The gap between a proof-of-concept demonstration and a scaled, working system is where most of the money quietly disappears.
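
The overrun arithmetic is simple enough to check by hand, but a short Python sketch makes it easy to rerun for any quote. The function name and default percentages are assumptions taken from the 30 to 50 percent range cited above, not from any vendor's pricing model.

```python
def realistic_cost_range(quoted_price: int, low_pct: int = 30, high_pct: int = 50) -> tuple[int, int]:
    """Return the (low, high) all-in cost for a quoted price.

    Integer maths is used so whole-dollar results stay exact.
    """
    low = quoted_price * (100 + low_pct) // 100
    high = quoted_price * (100 + high_pct) // 100
    return low, high


low, high = realistic_cost_range(500_000)
print(f"quoted $500,000 -> realistic ${low:,} to ${high:,}")
```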

What actually works — and why

None of this means AI has no place in medicine. It clearly does. Cleveland Clinic expanded an AI-based sepsis detection system across its entire hospital network in 2024 and 2025, built on a neural network trained on over 760,000 patient encounters. Hospitals that deployed AI for billing simplification and appointment scheduling, practical tasks that fit naturally into existing daily workflows, saw some of the strongest adoption numbers in recent American Hospital Association survey data. A McKinsey survey from the fourth quarter of 2024 found that 64 percent of healthcare organisations that had implemented generative AI reported a positive return on investment, with the best deployments returning up to $3.20 for every dollar spent. The pattern across every successful case is the same: the AI was designed around an existing clinical process rather than dropped on top of it, doctors were involved from the very beginning rather than consulted at the end, and the tool was tested in the actual environment where it would be used, not just in a laboratory with clean and tidy data.

The real diagnosis

Healthcare AI is not failing because the technology is bad. Some of it is genuinely remarkable. It is failing because the industry keeps treating implementation as the easy part, the step that happens after the real engineering work is done. In reality, it is the hardest part. A tool that a doctor never opens, no matter how sophisticated it is, does nothing for the patient lying in the bed. The hospitals spending millions on AI that sits unused are not being failed by the software. They are being failed by a process that builds for a boardroom demonstration and not for a Tuesday morning ward round, for an investor pitch and not for a nurse managing seven patients at once with no time to switch between screens.

The technology was ready. The question the industry still has not fully answered is whether it was ready to build something that people would actually use.
